I'm a Seattle-based motion designer and video producer, and for the last 15-plus years I've mostly been in the business of turning complicated ideas into stories people can actually follow. That has meant a lot of different roles over time, from producing hundreds of videos at Alcon to shaping launch films and product stories on Microsoft's Visual Design team.

No matter the project, I usually start in the same place. What is the story, who is it for, and what part actually matters to the person watching it? Those questions have carried through everything from product demos and sizzle reels to nonprofit films and personal AI experiments. Lately I've also been using AI tools to speed up parts of the process, not to flatten the work, but to make more room for the part that still matters most: the human one.

VisD - UI Reel

UI Director, Motion Designer, Producer & Editor

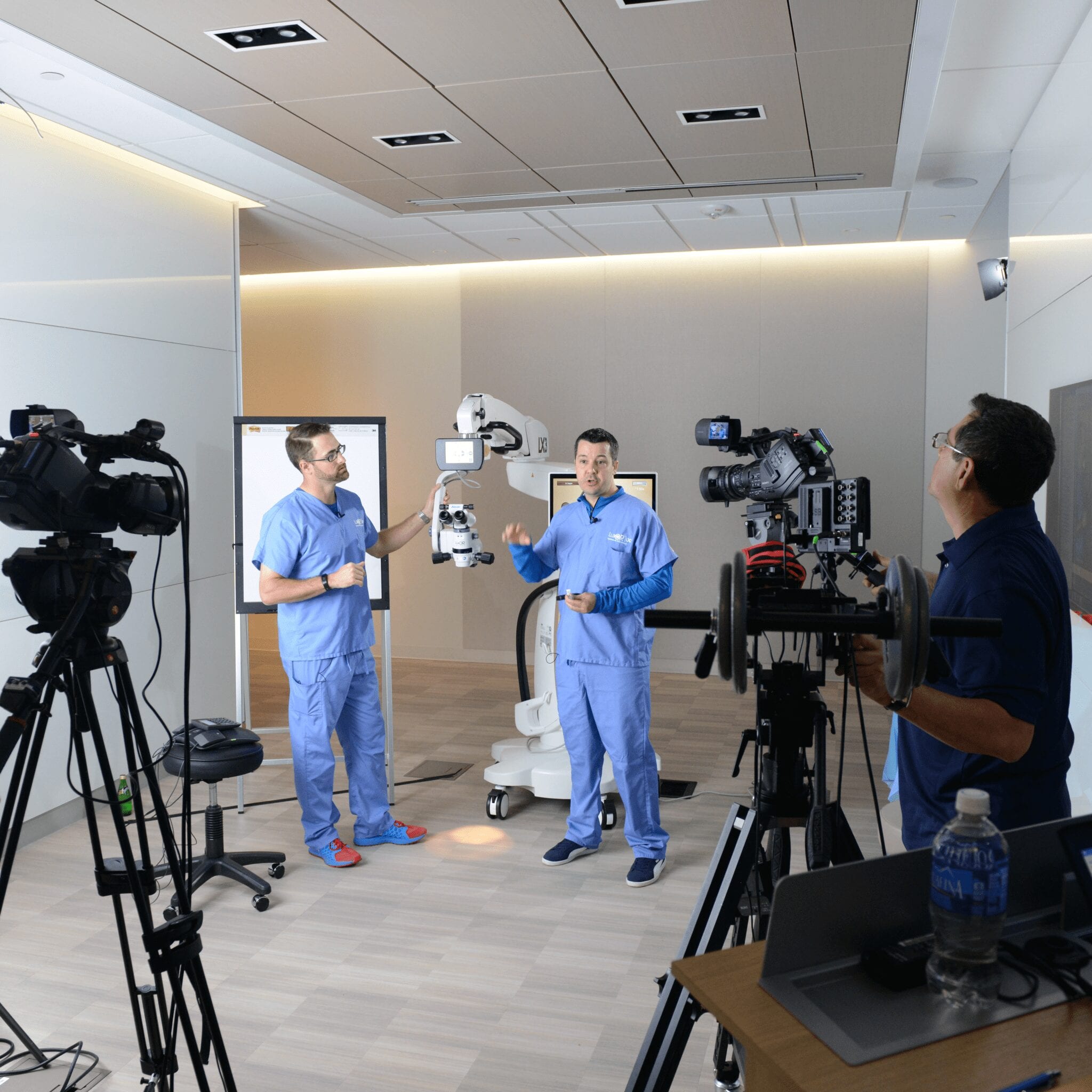

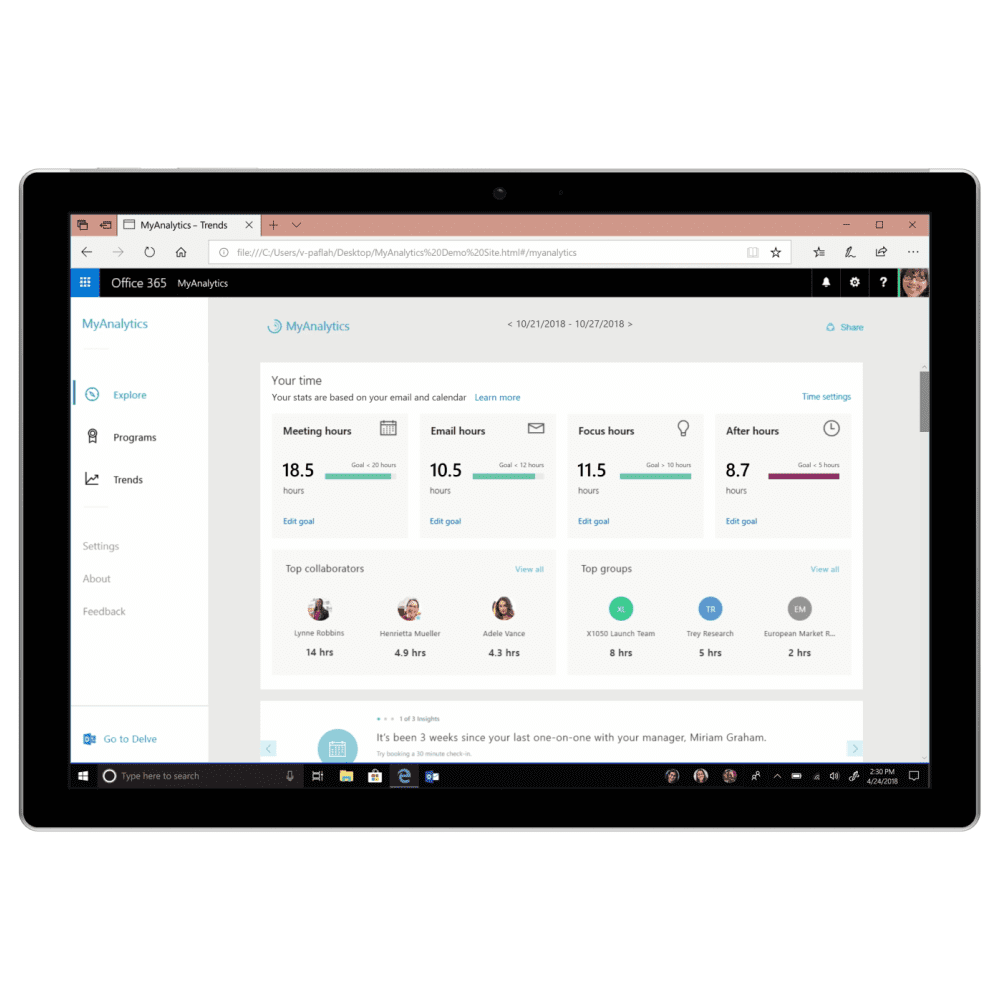

On Microsoft's Visual Design team, I worked on videos for Windows 11, Surface, Copilot+ PCs, and Recall. My job was owning the on-device demos and making sure the software moments felt clear, cinematic, and worth paying attention to.

Sometimes I was building those sequences myself. Other times I was leading a small team. Either way, I was responsible for how the screens looked, how they moved, and which product moments actually made it into the story.

My approach

I try to put story first. That sounds obvious, but it is easy for product videos to slide into a feature tour and forget there is a person on the other end of the screen.

I would rather frame the work around the user's experience and then build the visuals to support that. Along the way, I've also built a library of After Effects presets and scripts to make the process faster without flattening the storytelling.

Recall - Copilot+ PCs

Associate Creative Director & UI Production Director

For the Recall launch, I led the visualization work from concept through stage delivery. After months of planning, the project compressed into a final two-week sprint where everything had to come together fast.

Because the piece was built for a 50-foot event screen, I spent a lot of time tuning scale, motion, and pacing so the UI would still read clearly in the room and on camera. I also built on earlier Recall demos from the Surface and Copilot+ PC videos, pushing those scenarios further so they felt strong enough for a live reveal.

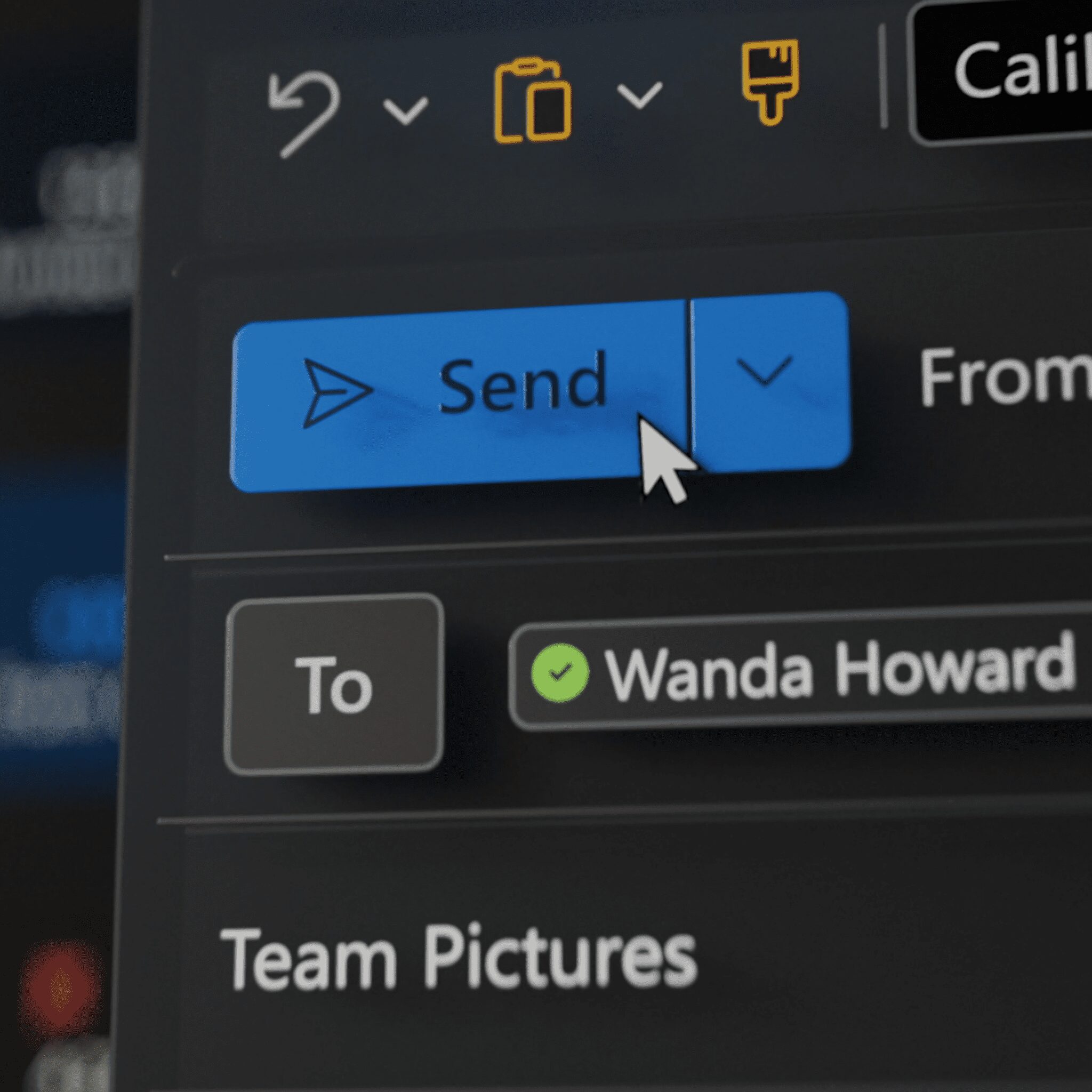

Microsoft 365 Sizzle Reels and Event Videos

Post-Production Supervisor, Motion Designer & Editor

A lot of the Microsoft 365 work lived in fast, high-energy sizzle reels built for events like Ignite. Those pieces had to move quickly, but they still needed to make complicated product stories feel watchable.

I handled post-production and motion design across a lot of that work, shaping the edit, building transitions, and figuring out how to condense a huge amount of information into something that felt sharp instead of crowded.

Introducing the new Bing in Windows

UI Production Director

This was our first serious attempt at showing AI inside Windows. We built the video in a 10-day sprint, which meant there was not much room for overthinking anything.

The main focus was Bing Chat in the taskbar, but we also used the piece to preview touch changes, Start updates, and virtual desktops. The job was to make that all feel simple enough to grasp on first watch.

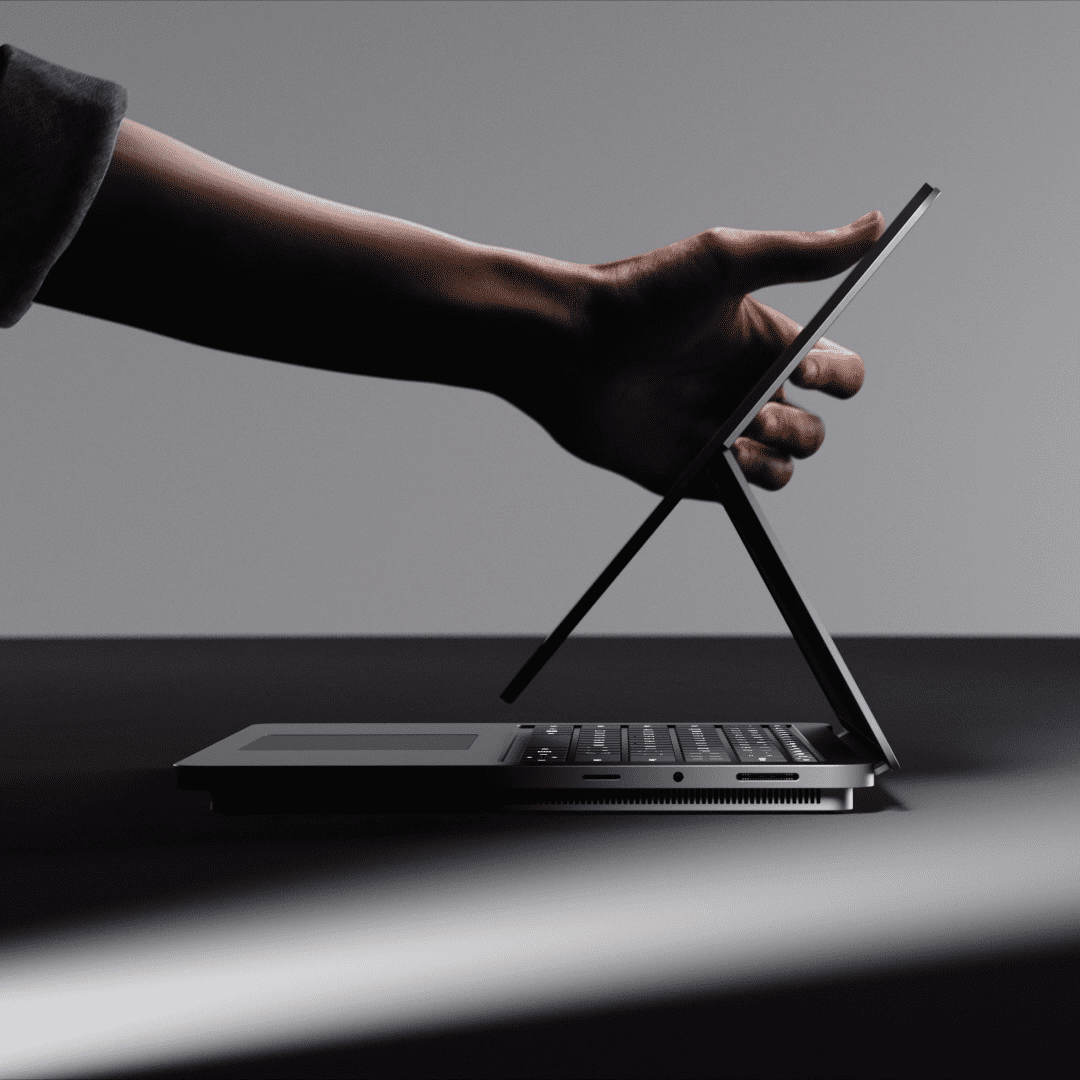

Surface Copilot+ PCs

UI Production Director

For the Surface Copilot+ PCs launch, the story had to do two things at once. It needed to sell the hardware, but it also had to make the AI features feel real and useful.

I led the UI side of that work and coordinated across design, engineering, and marketing so the feature moments stayed accurate without losing visual punch. Working with Man vs. Machine, we combined 2D animation, AI-generated art, 3D motion, and live footage into one piece that still felt cohesive.

Make AI Simple with Adobe

Director, Producer, Animator & Editor

Adobe brought me in to make a piece that explained their AI tools to marketers without turning the whole thing into jargon. That meant finding a story that felt useful first, then building the visuals around it.

I co-wrote the script, sketched the boards by hand, directed the voice-over, animated the graphics, and handled the edit. The challenge was making data-heavy workflows feel understandable and human, which is exactly the kind of problem I like.

United Way

Editor & Animator

For United Way, the work was much more personal. I edited a campaign built around real people and their stories, which meant the pacing had to stay sincere and let the right moments breathe.

I also cut a broader brandscape video that explained the organization's mission and impact. The two pieces did different jobs, but both came down to the same thing: finding the human center and not getting in its way.

Personal Project: AI Video Chatbot

This started as a side experiment to see if I could make an AI avatar feel more alive than a standard chatbot. I used ChatGPT for dialogue logic, GitHub Copilot to help stitch the code together, and Rive for the character movement.

Later I rebuilt the whole thing as a React app with Azure Cognitive Services underneath it, adding real-time voice and emotional cues. The project taught me a lot, but mostly it reminded me that you do not need to feel like a hardcore engineer to start making strange new things. You just need a reason to try.

If you want to try it, the live avatar is right below. The first response can take a few seconds while the server wakes up, then it should be ready to chat.

Personal Project: Social Video Generator

This project was a simple test with a clear goal. Could I take a text prompt and turn it into a short social video in about 30 seconds?

The tool was less about polish and more about speed. I wanted to show how AI could shrink the distance between an idea and a usable asset, especially for quick-turn content.

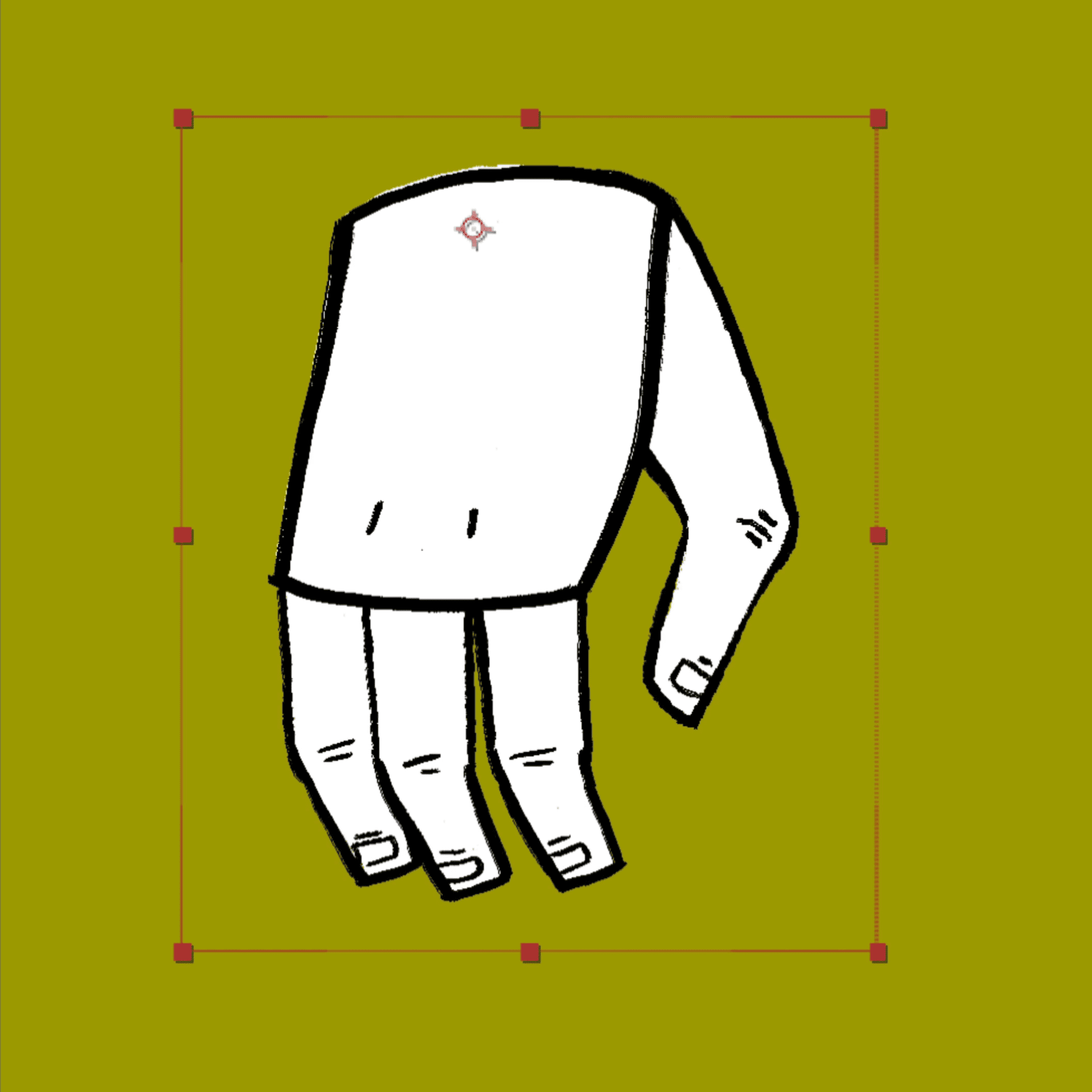

Personal Project: Translating Real-World Motion Into Design

I got interested in whether a real gesture could become the basis for motion design instead of just guessing at easing curves. So I started filming small physical actions, matching them, and studying the timing.

That turned into a simple capture-to-curve tool that converts recorded motion into editable easing for Figma, After Effects, Rive, or code. The whole point was to start from feel instead of approximation.
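To make the capture-to-curve idea concrete, here is a minimal sketch of how recorded motion could be turned into an editable easing. It is an illustration under assumptions, not the actual tool: it assumes the capture is a list of (time, position) samples, that the target is a CSS-style `cubic-bezier(x1, y1, x2, y2)` easing, and that a coarse grid search is good enough for the fit. Every name here (`fit_easing`, `to_css`, the sample values) is hypothetical.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (time in seconds, position in any unit)


def bezier(a: float, b: float, s: float) -> float:
    """One axis of a cubic Bezier running from 0 to 1 with inner control values a, b."""
    return 3 * a * s * (1 - s) ** 2 + 3 * b * s * s * (1 - s) + s ** 3


def solve_s(x1: float, x2: float, x: float) -> float:
    """Find the curve parameter s whose x value equals x, by bisection.

    With x1 and x2 kept inside [0, 1] the x axis never decreases, so bisection is safe.
    """
    lo, hi = 0.0, 1.0
    for _ in range(40):
        mid = (lo + hi) / 2
        if bezier(x1, x2, mid) < x:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def ease(x1: float, y1: float, x2: float, y2: float, x: float) -> float:
    """Evaluate the easing: progress in time (0..1) -> progress in position."""
    return bezier(y1, y2, solve_s(x1, x2, x))


def normalize(samples: List[Sample]) -> List[Sample]:
    """Rescale time and position so the motion starts at (0, 0) and ends at (1, 1)."""
    t0, p0 = samples[0]
    t1, p1 = samples[-1]
    return [((t - t0) / (t1 - t0), (p - p0) / (p1 - p0)) for t, p in samples]


def fit_easing(samples: List[Sample], steps: int = 20):
    """Grid-search the four control values that best match the recorded motion.

    Returns ((x1, y1, x2, y2), summed squared error). All values stay in [0, 1],
    so overshoot or bounce in the capture will not be reproduced by this sketch.
    """
    points = normalize(samples)
    grid = [i / steps for i in range(steps + 1)]
    best_params = (0.0, 0.0, 1.0, 1.0)
    best_err = float("inf")
    for x1 in grid:
        for x2 in grid:
            # The curve parameter for each sample depends only on x1 and x2.
            s_values = [solve_s(x1, x2, x) for x, _ in points]
            for y1 in grid:
                for y2 in grid:
                    err = sum(
                        (bezier(y1, y2, s) - y) ** 2
                        for s, (_, y) in zip(s_values, points)
                    )
                    if err < best_err:
                        best_params, best_err = (x1, y1, x2, y2), err
    return best_params, best_err


def to_css(params) -> str:
    """Format the fitted control values as a CSS-style easing string."""
    return "cubic-bezier({:.2f}, {:.2f}, {:.2f}, {:.2f})".format(*params)


if __name__ == "__main__":
    # Made-up samples standing in for a tracked gesture: fast start, slow settle.
    gesture = [(0.0, 0.0), (0.1, 42.0), (0.2, 71.0), (0.3, 88.0), (0.4, 97.0), (0.5, 100.0)]
    params, err = fit_easing(gesture)
    print(to_css(params), "error:", round(err, 5))
```

The resulting four numbers are the same control values most tools accept for a custom ease, which is what would make the output editable rather than baked in. A real version would likely need a better fit than a grid search and some way to handle overshoot, which this sketch deliberately leaves out.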

“The future is not AI versus humans, it’s AI and humans collaborating.” — Satya Nadella

I tend to jump in wherever the project needs help, whether that is scripting, directing, shooting, animating, or cleaning up the final edit. That range has always been part of how I work.

More recently, AI has become useful in the same practical way. I use it to transcribe footage faster, generate rough b-roll ideas, or build temporary voice tracks when the piece needs one. For me, the point is not replacing the creative work. It is clearing out some of the friction so there is more time for the story.

Selected Works